One of the early applications of AI in medicine has been the widespread adoption of early warning systems integrated into inpatient care. These systems “surveil” various datasets and notify physicians and nurses when something is amiss or may be heading in that direction. The system examined in this report is Epic’s Deterioration Index (EDI).

EDI relies on a previously “vetted” model built on 31 clinical measures captured in the hospital’s electronic health records. Every 15 minutes, it calculates a score estimating how likely a given patient is to need “an escalation of care” within the next 6 to 18 hours. Those escalations include:

- Activation of a rapid response team – a specialized group that goes to the patient’s bedside to assess whether they require more intensive care.

- Transfer to an intensive care unit (ICU)

- Cardiopulmonary arrest

- Death

The score ranges from 0 to 100, with higher scores correlating with higher risk.

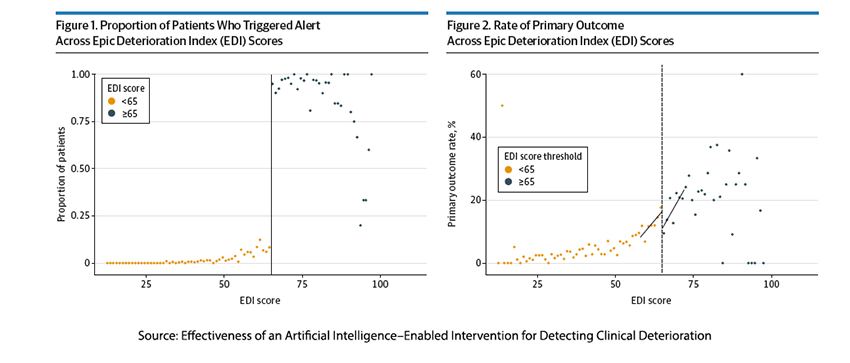

Because we are dealing with human lives, the ethical hurdles of a randomized controlled trial led the researchers to use a quasi-experimental regression discontinuity design (RDD). It hinges on a clear cutoff point on a continuous variable, in this instance an EDI score of 65. It assumes that individuals just on either side of that cutoff are essentially similar, so any observed difference between them is attributable to the treatment. The difference is measured by fitting separate regression lines on each side of the cutoff; the gap between the two lines at the cutoff is the estimate of the treatment effect.
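To make the regression-discontinuity logic concrete, here is a minimal Python sketch of a sharp RDD estimate, assuming equal weighting of all points within a band around the cutoff and a straight-line fit on each side. The cutoff of 65 and the 7-point band come from the study; the function name `rdd_jump` and the simulated data are hypothetical, and the authors’ actual estimation procedure may well differ (for example, in kernel weighting or bandwidth selection).

```python
import numpy as np


def rdd_jump(score, outcome, cutoff=65.0, bandwidth=7.0):
    """Sharp regression-discontinuity estimate of the jump at `cutoff`.

    Illustrative assumptions: every score >= cutoff counts as "treated"
    (a sharp design), all points within `bandwidth` of the cutoff are
    weighted equally, and a straight line is fit separately on each side.
    """
    score = np.asarray(score, dtype=float)
    outcome = np.asarray(outcome, dtype=float)

    # Keep only observations inside the band around the cutoff
    # (the study analyzed scores within 7 points of 65).
    in_band = np.abs(score - cutoff) <= bandwidth
    x = score[in_band] - cutoff  # center so each line's intercept sits at the cutoff
    y = outcome[in_band]
    below = x < 0
    above = ~below

    # np.polyfit(x, y, 1) returns [slope, intercept]; the intercept is the
    # fitted outcome at x == 0, i.e. exactly at the cutoff.
    _, left_at_cutoff = np.polyfit(x[below], y[below], 1)
    _, right_at_cutoff = np.polyfit(x[above], y[above], 1)
    return right_at_cutoff - left_at_cutoff


if __name__ == "__main__":
    # Simulated (not study) data: risk of escalation rises with the score,
    # and crossing the cutoff lowers it by a built-in 0.10.
    rng = np.random.default_rng(0)
    scores = rng.uniform(50, 80, size=5000)
    risk = 0.01 * (scores - 40) - 0.10 * (scores >= 65)
    escalated = rng.binomial(1, risk)
    print(f"estimated jump at the cutoff: {rdd_jump(scores, escalated):+.3f}")
```

Because the simulated drop at the cutoff is built in as 0.10, the printed estimate should land somewhere near -0.10, with sampling noise. With real data, that jump is precisely the number whose interpretation the rest of this report questions.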

While the researchers took pains to establish their cutoff, in clinical practice it may be more fuzzy than clear, an acknowledged weakness of the study. When a patient’s EDI score rose to 65 or more, the patient’s nurse and physician received an automated alert “to initiate a collaborative workflow … to assess for potential reasons for clinical deterioration.” This points to a second limitation: medical teams were not required to take any specific clinical action. As a result, the findings describe a “treatment” that includes not just the EDI score and its cutoff but also the alert system and the action plan that followed. So, while “success” might be attributable to the EDI, failure will “have many fathers.”
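The alert that defines the “treatment” can be sketched as a simple threshold-crossing rule over the 15-minute EDI scores. This is a hypothetical illustration, not Epic’s implementation: the names `Alert` and `alerts_on_crossing` are invented here, and the assumption that an alert fires once per upward crossing (and not again while the score stays at or above 65) is ours, since the report does not describe re-alerting behavior.

```python
from dataclasses import dataclass

ALERT_THRESHOLD = 65        # cutoff used in the study
SCORE_INTERVAL_MIN = 15     # EDI is recalculated every 15 minutes


@dataclass
class Alert:
    minute: int    # minutes since the first score in the series
    score: float   # EDI score that triggered the alert


def alerts_on_crossing(scores, threshold=ALERT_THRESHOLD, interval_min=SCORE_INTERVAL_MIN):
    """Hypothetical rule: alert once each time the score moves from below
    the threshold to at or above it; no repeat alerts while it stays high."""
    alerts = []
    previously_high = False
    for i, score in enumerate(scores):
        high = score >= threshold
        if high and not previously_high:
            alerts.append(Alert(minute=i * interval_min, score=score))
        previously_high = high
    return alerts


if __name__ == "__main__":
    # A made-up sequence of 15-minute scores for one patient.
    for alert in alerts_on_crossing([52, 58, 63, 66, 70, 64, 67]):
        print(f"alert at minute {alert.minute}: EDI {alert.score}")
```

With that made-up sequence, the sketch alerts at minute 45 (score 66) and again at minute 90 (score 67), after the score dips below 65 and rises again; whether the real system re-alerts in that situation is not stated in the study.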

The outcomes of interest were each individual escalation of care avoided and a composite of all four escalations.[1] All patients admitted to four medical units between January 2021 and November 2022 were followed, just shy of 10,000 admissions. About 10% of those admissions, 963 patients with a median age of 76, had EDI scores within 7 points of the cutoff of 65 and formed the study dataset. The patients within that 14-point band were relatively evenly matched for age and comorbidities, although slightly more of those with scores above 65 had been admitted for respiratory problems or infections.

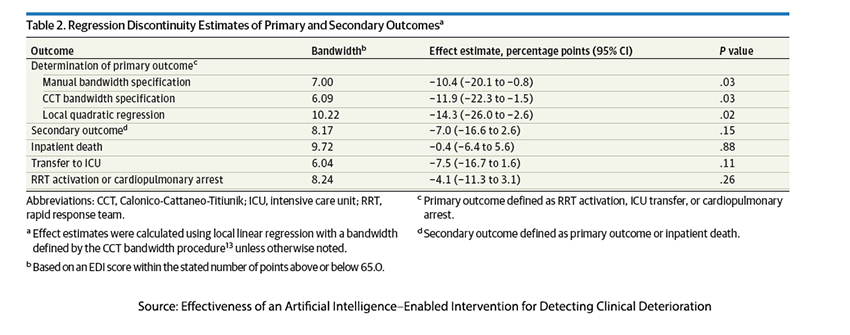

Using EDI to notify physicians and nurses of a potential escalation of care reduced the need for such escalations by a statistically significant 10%. The composite outcome showed a 7% reduction in overall escalations that fell just short of statistical significance. From an absolutist view, did the warning save lives? The answer is no: deaths fell by a clinically and statistically insignificant 0.4%. From an administrative viewpoint, the alerts might have reduced costs associated with longer stays and with ICU rather than ward care.

Before we draw any more conclusions, it may be worth looking at a visualization of the data. On the left, we see the number of patients triggering alerts; on the right, we see those who experienced an escalation of care. For patients with scores below 65, there is a “significant” number of escalations: the cutoff was insensitive to their plight, and they were the false negatives. On the other hand, did patients with scores above 65 have better outcomes because of the alert, or were more of them simply false positives? It is hard to tell without knowing whether any intervention was warranted or even considered. While we may ascribe the reductions in escalation to the alerts, it might be that the cutoff was overly sensitive. That is the inherent weakness of the design the researchers chose. I do not fault their choice; it was undoubtedly the more ethical design, but as with all models, it simplifies.
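The false-negative and false-positive trade-off can be illustrated by tallying a confusion table at a given cutoff. The Python sketch below uses a hypothetical helper, `threshold_metrics`, and made-up toy data rather than the study’s. One caveat applies to exactly the problem described above: when alerts prompt interventions, a flagged patient who avoids escalation counts as a “false positive” here even if the alert is what prevented it.

```python
def threshold_metrics(scores, escalated, cutoff=65):
    """Tally a confusion table for 'alert if score >= cutoff' against
    whether an escalation of care actually occurred."""
    tp = fp = fn = tn = 0
    for score, event in zip(scores, escalated):
        flagged = score >= cutoff
        if flagged and event:
            tp += 1          # alerted, and the patient escalated
        elif flagged:
            fp += 1          # alerted, no escalation (or one the alert prevented)
        elif event:
            fn += 1          # no alert, yet the patient escalated
        else:
            tn += 1          # no alert, no escalation
    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "ppv": tp / (tp + fp) if tp + fp else float("nan"),
    }


if __name__ == "__main__":
    # Toy data (not from the study): EDI scores and whether escalation occurred.
    scores = [55, 58, 61, 62, 63, 64, 66, 68, 70, 72]
    escalated = [False, False, True, False, False, False, True, False, True, True]
    for cutoff in (65, 60):
        m = threshold_metrics(scores, escalated, cutoff)
        print(f"cutoff {cutoff}: sensitivity {m['sensitivity']:.2f}, PPV {m['ppv']:.2f}")
```

On this toy data, lowering the cutoff from 65 to 60 raises sensitivity from 0.75 to 1.0 but drops positive predictive value from 0.75 to 0.5: fewer missed escalations at the price of more alerts that look like false positives.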

The other great weakness is that we have no information about whether any interventions were made, only that providers were alerted. Alerts without actionable information and subsequent action will, over time, carry no more weight than the boy who cried wolf or Chicken Little announcing that the sky is falling.

The path from theory to practice is often fraught with thorns of skepticism and mirages of false hope. With its compelling promise of preemptive intervention, EDI beckons us toward a future where calamity can be averted with the tap of a touchscreen. Yet as we scrutinize the data and dissect the design, we must ask: was it truly EDI’s vigilance that averted escalations, or merely the caprice of chance? Answering that will require “more research.”

[1] Including a composite of all four escalations as a separate outcome can produce a statistically significant effect even when none of the individual outcomes is significant on its own. Composites are frequently used; witness the Major Adverse Cardiovascular Events (MACE) composite, which includes myocardial infarction, stroke, cardiovascular death, and unstable angina.
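The footnote’s point can be shown with a small worked example. The counts below are invented for illustration (they are not the study’s data); each patient is assumed to experience at most one of the four outcomes, so the composite count is a simple sum; and the test is a plain two-sided, pooled two-proportion z-test rather than whatever analysis the authors used.

```python
import math


def two_proportion_p(events_a, n_a, events_b, n_b):
    """Two-sided p-value for a difference in proportions (pooled z-test)."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


N = 500  # hypothetical patients per arm
# Hypothetical event counts per outcome: (no alert, alert)
outcomes = {
    "rapid response": (20, 12),
    "ICU transfer": (15, 8),
    "cardiopulmonary arrest": (10, 5),
    "death": (10, 5),
}

p_values = {name: two_proportion_p(a, N, b, N) for name, (a, b) in outcomes.items()}
# Composite: assumes no patient has more than one outcome, so counts simply add.
composite = tuple(sum(counts) for counts in zip(*outcomes.values()))
p_values["composite"] = two_proportion_p(composite[0], N, composite[1], N)

for name, p in p_values.items():
    print(f"{name:>24}: p = {p:.3f}")
```

Each individual comparison is non-significant (p > 0.05), yet pooling the same events into a composite yields p < 0.01, which is why a significant composite deserves a careful reading.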

Source: “Effectiveness of an Artificial Intelligence–Enabled Intervention for Detecting Clinical Deterioration,” *JAMA Internal Medicine*, DOI: 10.1001/jamainternmed.2024.0084