An often toxic mixture of snark and narcissism, Twitter usually does not bring out the best in humanity. Amidst all the noise, however, scientists are searching for a useful signal: a predictor of mental illness.

People who use social media reveal an awful lot about themselves. Much of the time, this is purposeful, as users share their political opinions and religious beliefs. But they also could be revealing things of which they are completely unaware, such as a deterioration in their mental health. Subtle alterations in the way a person communicates on Twitter could reveal the onset of conditions such as depression or post-traumatic stress disorder (PTSD).

An interdisciplinary team of American scientists analyzed Twitter data from 105 patients with clinically diagnosed depression and 99 healthy controls. The team's goal was to determine whether, using a supervised learning algorithm, they could detect telltale changes in language that serve as potential markers of depression. Indeed, they could.

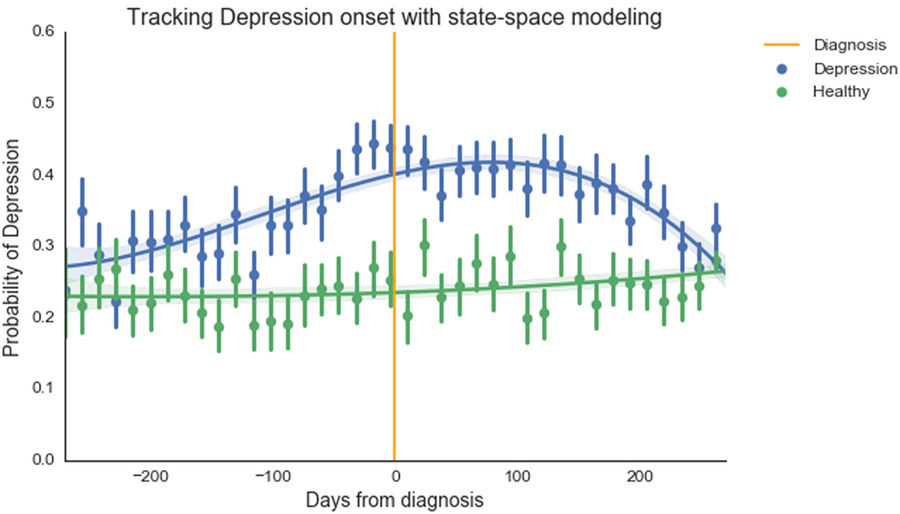

Depressed people tended to use more negative words, such as "no," "never," and "death," and fewer positive ones, such as "happy" and "photo." Additionally, the algorithm detected signs of depression 100 to 200 days before the depressed patients were clinically diagnosed, which suggests that it could serve as a predictive tool.

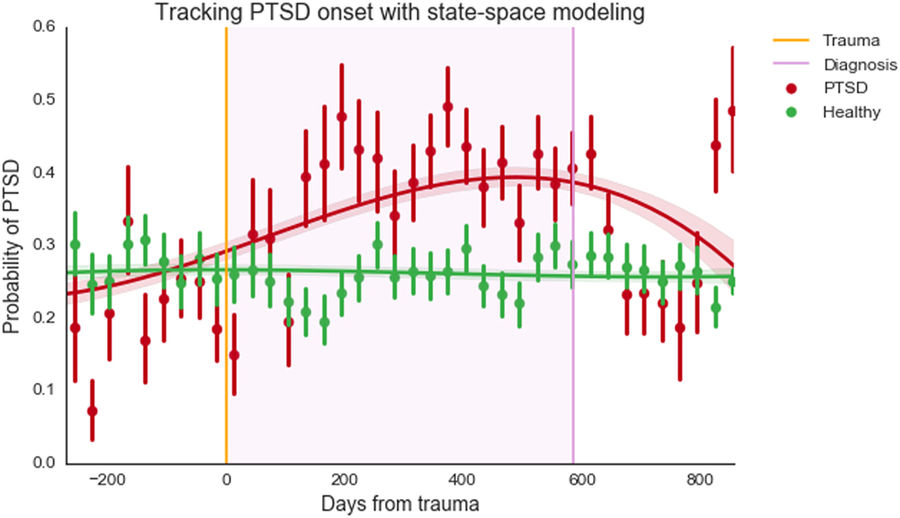

The authors also tested whether their algorithm could predict PTSD, using a separate group of individuals (111 who were healthy and 63 who had PTSD). Immediately following the traumatic event, the algorithm detected a noticeable change in Twitter behavior among those suffering from PTSD.

If the algorithm gave a diagnosis of PTSD, it was right roughly 90% of the time. This screening characteristic is known as positive predictive value (PPV), or precision.* By comparison, general practitioners have a PPV of only about 50%.
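To make the two screening terms concrete, here is a minimal Python sketch of how precision (PPV) and recall (sensitivity) are computed from the counts of correct and incorrect calls. The function names and the counts are hypothetical, chosen only so that the results match the roughly 90% and 70% figures quoted in this article; they are not the study's actual confusion matrix.

```python
def precision(true_pos: int, false_pos: int) -> float:
    """Positive predictive value: share of positive calls that are correct."""
    return true_pos / (true_pos + false_pos)


def recall(true_pos: int, false_neg: int) -> float:
    """Sensitivity: share of truly affected people that the test flags."""
    return true_pos / (true_pos + false_neg)


# Hypothetical counts for illustration only; NOT taken from the study.
true_pos, false_pos, false_neg = 630, 70, 270

print(f"precision (PPV): {precision(true_pos, false_pos):.2f}")
print(f"recall (sensitivity): {recall(true_pos, false_neg):.2f}")
```

Precision answers "if the test says yes, how often is it right?", while recall answers "of the people who really have the condition, how many does the test catch?"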

The study has at least two shortcomings. First, though a PPV of 90% sounds great, the PPV in real-world screening would be much lower. This is due to an inherent limitation in the design of case-control studies. These studies are conducted on a preselected ratio of healthy and unhealthy people (often around 1:1), a ratio that is certainly not true for the general population, where healthy people far outnumber those with PTSD. Because PPV depends on how common a condition is, data from this research alone cannot yield a reliable share of false positives among positive results, which means the reported PPV of ~90% is inflated.
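The dependence of PPV on prevalence can be illustrated with a rough back-of-the-envelope sketch. It assumes, for illustration, that the ~90% PPV and 70% sensitivity quoted in this article were both measured on the full PTSD sample of 63 cases and 111 controls; the implied specificity and the lower prevalence values are therefore hypothetical, and the function names are our own.

```python
def implied_specificity(ppv_sample, sensitivity, n_cases, n_controls):
    """Back out specificity from a PPV observed in a case-control sample."""
    true_pos = sensitivity * n_cases
    # PPV = TP / (TP + FP)  =>  FP = TP * (1 - PPV) / PPV
    false_pos = true_pos * (1 - ppv_sample) / ppv_sample
    return 1 - false_pos / n_controls


def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Bayes' rule: P(condition | positive test) at a given prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# Assumption: both reported figures refer to the 63-case, 111-control sample.
spec = implied_specificity(0.90, 0.70, n_cases=63, n_controls=111)

# First value is the sample's own case share; the rest are hypothetical.
for prevalence in (63 / 174, 0.10, 0.05, 0.01):
    value = ppv_at_prevalence(0.70, spec, prevalence)
    print(f"prevalence {prevalence:.1%} -> PPV {value:.1%}")
```

Under these assumptions, the PPV equals the reported 90% only at the sample's own case share of about 36%. At a hypothetical prevalence of 5%, it falls below 50%, because the same false positive rate is applied to a much larger pool of healthy people.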

Second, the tool is of limited use for people who don't use social media. However, it is easy to imagine this algorithm being helpful for monitoring text messages between therapists and their patients.

Even so, the authors believe they have created a tool to screen people for signs of mental illness. Perhaps some greater good may come from Twitter, after all.

*Note: The sensitivity (also known as recall), which gives the probability that the algorithm will correctly identify a person who has PTSD, was 70%.

Source: Andrew G. Reece, Andrew J. Reagan, Katharina L. M. Lix, Peter Sheridan Dodds, Christopher M. Danforth, and Ellen J. Langer. "Forecasting the onset and course of mental illness with Twitter data." Scientific Reports 7, Article number: 13006. Published: 11-Oct-2017. doi: 10.1038/s41598-017-12961-9