There are any number of algorithmic risk calculators that combine a host of measurables to predict our risk of dying, from “all-cause” mortality to more specific cardiovascular deaths. Many use some measure of body weight, frequently the oft-maligned body mass index (BMI), as a proxy for our overall proportion of fat, muscle, and bone. Dual-energy X-ray absorptiometry (DXA) scans are a simple, non-invasive means of looking at our body composition. [1] While they are most often used to measure bone density when screening for osteoporosis, a total-body DXA (TBDXA) scan images and estimates our lean tissue, fat, and bone mineral content.

Those quantified estimates are, in turn, often used as metrics or ratios to further refine the understanding of our body composition. In quantifying the image to get those metrics and ratios, we lose information that we cannot easily quantify or describe, information that artificial intelligence, a champion at pattern recognition, may readily identify. An essential feature of the current study is that the data were the images themselves, not our abstractions. Of course, in many instances, what AI identifies remains a black box mystery to us, making its predictions less open to our inspection and more in keeping with its oracular roots.

The current study looks at the predictive ability of TBDXA in conjunction with readily available clinical information for determining mortality. The dataset was Health ABC, a prospective cohort study of 3,075 participants aged 70 to 79 years at the time of recruitment, without difficulty walking a quarter mile or climbing 10 steps, without treatment for cancer within three years, and able to perform their activities of daily living (ADL): basically well individuals with the usual aches and pains of their 70s. The cohort was roughly evenly split by sex, 42% Black and 58% White, with 58% self-reporting heart disease, 21% reporting respiratory disease, 15% with diabetes, and 19% with a history of cancer.

Participants were followed for 16 years and were seen at regular intervals to be examined. [2] Attempts were made to complete 8 TBDXA scans over the interval. There were 1,992 deaths during follow-up. Seventy percent of the data was used to train the AI algorithm, and 20% was held out as the test set, which is the source of the results reported here.

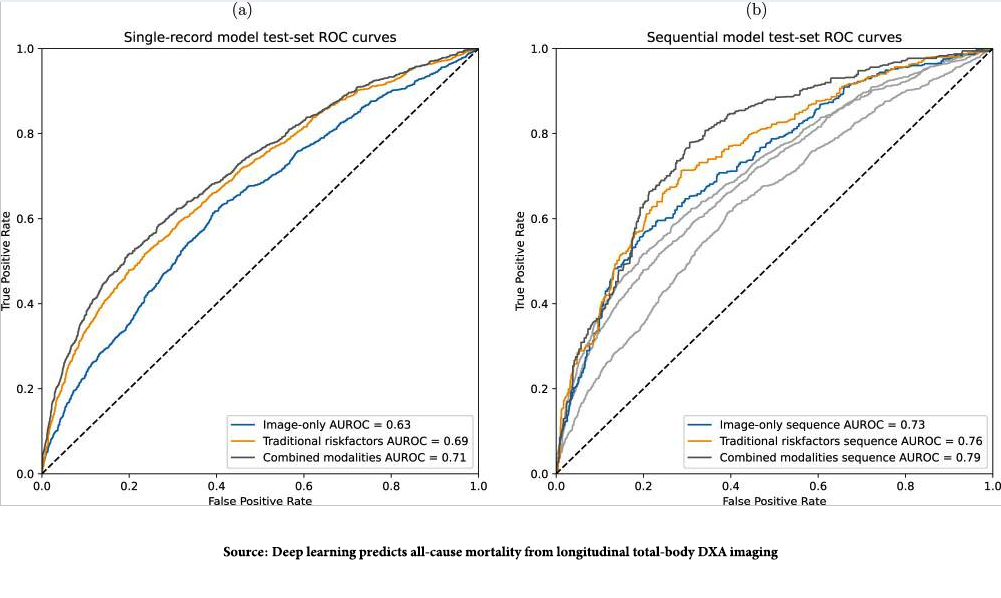

The images show the “area under the receiver operating characteristic curve” (AUROC), a measure of how well the model separates those who died from those who survived. The dotted line, an AUROC of 0.5, is consistent with a coin toss, a 50-50 chance of being correct. The more the curve bows toward the upper left, the better the model’s predictive ability. There are two noteworthy points. First, the combination of imaging data from the TBDXA and the classical risk factors we collect predicted deaths more accurately than either did alone. Second, the graph to the right shows the AUROC when the model was given the changing, longitudinal TBDXA and clinical data, and it was even more accurate in predicting those deaths. That makes sense; the snapshot often fails to convey what the movie really shows.
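For readers who want to see what an AUROC actually measures, here is a minimal sketch in Python. It is not the study's code. The function name `auroc` and the toy numbers are made up for illustration. It uses the pairwise reading of AUROC: the chance that a randomly chosen person who died was given a higher risk score than a randomly chosen survivor, with ties counting as half.

```python
def auroc(labels, scores):
    """Fraction of (died, survived) pairs in which the person who died
    received the higher risk score; ties count as half a win."""
    died = [s for y, s in zip(labels, scores) if y == 1]
    survived = [s for y, s in zip(labels, scores) if y == 0]
    if not died or not survived:
        raise ValueError("need at least one death and one survivor")
    wins = 0.0
    for d in died:
        for s in survived:
            if d > s:
                wins += 1.0
            elif d == s:
                wins += 0.5
    return wins / (len(died) * len(survived))


# Hypothetical toy data: 1 = died during follow-up, 0 = survived.
outcomes = [1, 1, 1, 0, 0, 0]
model_risk = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]   # one survivor outranks one death
coin_toss = [0.5] * 6                          # a model with no information

print(auroc(outcomes, model_risk))  # 8 of 9 pairs ranked correctly
print(auroc(outcomes, coin_toss))   # every pair is a tie, so 0.5
```

In the toy data, one person who died was scored 0.4, below a survivor scored 0.5, so one of the nine pairs is ranked the wrong way and the AUROC comes out at 8/9. The uninformative scores land exactly on the dotted-line value of 0.5.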

“This study demonstrates that combining full TBDXA scans and traditional mortality risk factors results in stronger mortality prediction models than using either modality on its own. The results presented here also show that the progression of body composition and health markers over time can be leveraged to further improve mortality prediction.”

The researchers attempted to pry open the black box of prediction using what they referred to as ablation studies, in which they withheld different pieces of information from the model to see how much its performance degraded. In the longitudinal model, the variables whose removal significantly reduced predictive ability were ADL disability status, fasting serum glucose, and walking speeds for long and medium distances. This certainly suggests that staying active and maintaining metabolic control are markers of enhanced longevity. [3]
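The logic of an ablation study can be sketched in a few lines of Python. This is a toy under loud assumptions, not the researchers' deep learning pipeline. The data are synthetic, the feature names (`walking_speed`, `fasting_glucose`, `noise`) and the 70/30 split are chosen only for illustration, and the "model" is a simple difference-of-means scorer standing in for a neural network. The point is the procedure: retrain with one input withheld, then compare AUROC on held-out data.

```python
import random
import statistics


def auroc(labels, scores):
    """Chance that a person who died outscores a survivor (ties count half)."""
    died = [s for y, s in zip(labels, scores) if y == 1]
    lived = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if d > s else 0.5 if d == s else 0.0
               for d in died for s in lived)
    return wins / (len(died) * len(lived))


def fit(rows, labels, features):
    """Stand-in model: weight each feature by the gap between the group
    means (died minus survived), scaled by the feature's variance."""
    weights = {}
    for f in features:
        died = [r[f] for r, y in zip(rows, labels) if y == 1]
        lived = [r[f] for r, y in zip(rows, labels) if y == 0]
        spread = statistics.pvariance([r[f] for r in rows])
        weights[f] = (statistics.mean(died) - statistics.mean(lived)) / spread
    return weights


def predict(weights, row):
    return sum(w * row[f] for f, w in weights.items())


def ablation_study(train, y_train, test, y_test, features):
    """Retrain with each feature withheld in turn; report test-set AUROC."""
    results = {}
    for dropped in [None] + list(features):
        kept = [f for f in features if f != dropped]
        weights = fit(train, y_train, kept)
        scores = [predict(weights, r) for r in test]
        name = "all features" if dropped is None else "without " + dropped
        results[name] = auroc(y_test, scores)
    return results


# Synthetic cohort (assumption): slower walkers with higher glucose die more often.
rng = random.Random(0)
labels = [i % 2 for i in range(400)]          # 1 = died, 0 = survived
rows = [
    {
        "walking_speed": rng.gauss(1.0 if y else 1.3, 0.2),
        "fasting_glucose": rng.gauss(110 if y else 100, 15),
        "noise": rng.gauss(0, 1),             # carries no signal by design
    }
    for y in labels
]
FEATURES = ["walking_speed", "fasting_glucose", "noise"]
split = 280                                    # arbitrary 70/30 split for the toy
results = ablation_study(rows[:split], labels[:split],
                         rows[split:], labels[split:], FEATURES)
for name, value in results.items():
    print(f"{name}: AUROC {value:.3f}")
```

A feature matters, in this sense, when the AUROC drops noticeably once it is withheld. A feature like `noise` should make little difference either way.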

As with all algorithms based on AI learning, there are biases. First, the algorithm performed better (more accurately) for White participants, possibly because the data skewed toward White participants (58%) over Black participants (42%). More interesting is the fact that the algorithm performed best in identifying cardiovascular deaths. As the researchers write,

“This observation aligns well with the existence of body-composition-related factors contributing to cardiovascular disease, information that would be available to the model through both the TBDXA and metadata variables.”

This may also explain why the algorithm was better at identifying male deaths, as the effect of body composition on mortality is “more pronounced in men than in women.”

Finally, I would fail in my writing if I did not acknowledge Dr. Peter Attia’s article on this study. He remains a constant source of serendipitous learning for which I am grateful, and hopefully, my readers are the beneficiaries.

[1] The radiation dose from a total-body DXA scan is minimal, about 1 to 5 microsieverts (µSv). For context, a mammogram delivers about 400 µSv, and the average background radiation from natural sources (such as cosmic rays and radioactive substances in the earth) is around 3,000 µSv per year.

[2] Clinical data included race, sex, age, height, weight, BMI, blood glucose, fasting glucose, blood insulin, fasting insulin, hemoglobin A1c, interleukin 6, general indicators of fitness (walking speed and grip strength), and self-reported disability for walking, stair climbing, and ADLs.

[3] It is somewhat ironic that this AI discovery tells us something we have been told by our mothers and grandmothers, “eat right and be active.”

Source: Deep learning predicts all-cause mortality from longitudinal total-body DXA imaging. Communications Medicine. DOI: 10.1038/s43856-022-00166-9

Understanding the Relationship Between Body Composition and Mortality Using Artificial Intelligence (AI). Peter Attia, MD