Patients vs. Predictive Medicine

According to a class action lawsuit filed in federal court in Minnesota, two elderly Medicare Advantage beneficiaries—one a 91-year-old man recovering from a fractured leg and ankle, the other a 74-year-old stroke patient undergoing rehabilitation—were allegedly forced out of necessary post-acute rehabilitation care after their insurance coverage was cut off by an artificial intelligence system.

The plaintiffs allege that UnitedHealth Group, UnitedHealthcare, and naviHealth used an AI tool called nH Predict to estimate how long patients “should” need post-acute care, and then ended coverage based on those estimates rather than on treating physicians’ advice. Consequently, patients were allegedly discharged too early or had to pay thousands of dollars out of pocket to continue medically necessary treatment.

Notably, the lawsuit does not argue that the algorithm itself was a defective product. Instead, it claims the companies improperly relied on it to make coverage decisions that should have been assessed individually under Medicare Advantage rules. The complaint claims that the defendants acted in “bad faith” by essentially delegating their responsibility to review claims to the nH Predict model rather than exercising independent medical judgment.

The lawsuit further claims that many denials, reportedly as many as 90% in some cases, were later overturned on appeal. Plaintiffs argue this shows that the system produced systematically incorrect decisions and that the companies either knew or should have known the tool was unreliable. However, appeal reversals do not perfectly match statistical “error rates,” a distinction that becomes important later.

Just Say No

One overlooked aspect of prior authorizations and payment denials is the power of no. Only a small portion of denied prior authorization requests were appealed in 2024, even though 80% of those appeals were partially or fully overturned. This raises a clear question: if denials are rarely appealed, how much influence do automated systems have on the initial decision?
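The leverage of an unappealed denial can be made concrete with simple arithmetic. The sketch below is illustrative only: the 80% overturn rate comes from the paragraph above, while the 10% appeal rate is a hypothetical placeholder, since the text says only that "a small portion" of denials were appealed.

```python
def share_of_denials_that_stand(appeal_rate: float, overturn_rate: float) -> float:
    """Fraction of all initial denials that remain in force after appeals.

    appeal_rate   -- share of denials that are appealed at all
    overturn_rate -- share of appealed denials that are partially or fully overturned
    """
    if not (0.0 <= appeal_rate <= 1.0 and 0.0 <= overturn_rate <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    overturned_share = appeal_rate * overturn_rate
    return 1.0 - overturned_share


# Hypothetical appeal rate: an assumption for illustration, not a reported figure.
hypothetical_appeal_rate = 0.10
# Overturn rate taken from the text above.
overturn_rate = 0.80

standing = share_of_denials_that_stand(hypothetical_appeal_rate, overturn_rate)
print(f"Share of initial denials left standing: {standing:.0%}")
```

Under these assumed inputs, the large majority of initial denials are never corrected, whatever the overturn rate among the few that are appealed.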

Inside the Black Box of nH Predict

As described by Ars Technica:

“It’s unclear how nH Predict works exactly, but it reportedly estimates post-acute care by pulling information from a database containing medical cases from 6 million patients. NaviHealth case managers plug in certain information about a given patient—including age, living situation, and physical functions—and the AI algorithm spits out estimates based on similar patients in the database. The algorithm estimates medical needs, length of stay, and discharge date.”

Like many proprietary algorithms, the internal workings are unknown, and there are no public statistics on the model's accuracy. [1]
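Because the real model is not public, the following is only a toy illustration of what "estimates based on similar patients" could mean in general. It is not nH Predict. The fields, the distance weights, the choice of three neighbors, and the five-record database are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PastCase:
    age: int
    lives_alone: bool
    function_score: int  # hypothetical 0-100 physical function scale
    length_of_stay_days: int


def distance(age: int, lives_alone: bool, function_score: int, case: PastCase) -> float:
    """Crude similarity measure; the weights are arbitrary assumptions."""
    return (
        abs(age - case.age) / 10
        + (0 if lives_alone == case.lives_alone else 1)
        + abs(function_score - case.function_score) / 10
    )


def estimate_length_of_stay(age, lives_alone, function_score, database, k=3):
    """Median length of stay among the k most similar past cases."""
    if k < 1 or len(database) < k:
        raise ValueError("need at least k past cases")
    nearest = sorted(
        database, key=lambda c: distance(age, lives_alone, function_score, c)
    )[:k]
    return median([c.length_of_stay_days for c in nearest])


# Hypothetical database of past cases (the reported one holds about 6 million).
cases = [
    PastCase(88, True, 40, 28),
    PastCase(90, True, 35, 35),
    PastCase(92, False, 45, 21),
    PastCase(70, False, 80, 10),
    PastCase(75, True, 60, 14),
]

estimate = estimate_length_of_stay(91, True, 38, cases)
print(f"Estimated length of stay: {estimate} days")
```

The point of the sketch is structural: an estimate of this kind summarizes what happened to other patients. It contains nothing about the individual beyond the few inputs entered, which is why the question of individualized review matters.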

The 90% error rate reflects appeals that were reversed either internally or by a federal administrative law judge. [2] Reversal rates on appeal don’t directly correspond to statistical false positive or false negative rates, but if a “positive” is a case flagged for denial, a denial that is later reversed is roughly analogous to a false positive. The true standard, as the lawsuit argues, is ultimately based on human-driven legal coverage standards, not on the model’s dataset. The algorithm, developed by naviHealth, a co-defendant and wholly owned subsidiary of UnitedHealth, was “specifically intended for it to save insurance companies money in the post-acute setting, which had previously been a highly unprofitable aspect of Medicare Services,” using “rigid criteria” and “unrealistic predictions.” Interestingly, while the original complaint emphasized the algorithm itself, the current amended complaint is more focused on the lack of human oversight.
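One way to see why a reversal rate is not an error rate: appeals are a self-selected subset of denials, so the reversal rate only bounds the share of all denials that were wrong. A minimal sketch, assuming that every reversal reflects a genuinely wrong denial and every upheld appeal a correct one, and using a hypothetical 10% appeal rate (the lawsuit gives no such figure):

```python
def wrongful_denial_bounds(appeal_rate: float, reversal_rate: float) -> tuple[float, float]:
    """Lower and upper bounds on the share of ALL denials that were wrong.

    Lower bound: only the reversed appeals were wrong.
    Upper bound: every unappealed denial was also wrong.
    """
    if not (0.0 <= appeal_rate <= 1.0 and 0.0 <= reversal_rate <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    confirmed_wrong = appeal_rate * reversal_rate
    never_reviewed = 1.0 - appeal_rate
    return confirmed_wrong, confirmed_wrong + never_reviewed


# 0.90 is the reversal figure alleged in the lawsuit; 0.10 is a hypothetical appeal rate.
lower, upper = wrongful_denial_bounds(appeal_rate=0.10, reversal_rate=0.90)
print(f"Wrongful denials: between {lower:.0%} and {upper:.0%} of all denials")
```

With so few denials reviewed, the bounds are very wide. The appeal data alone cannot distinguish a model that is wrong occasionally from one that is wrong most of the time.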

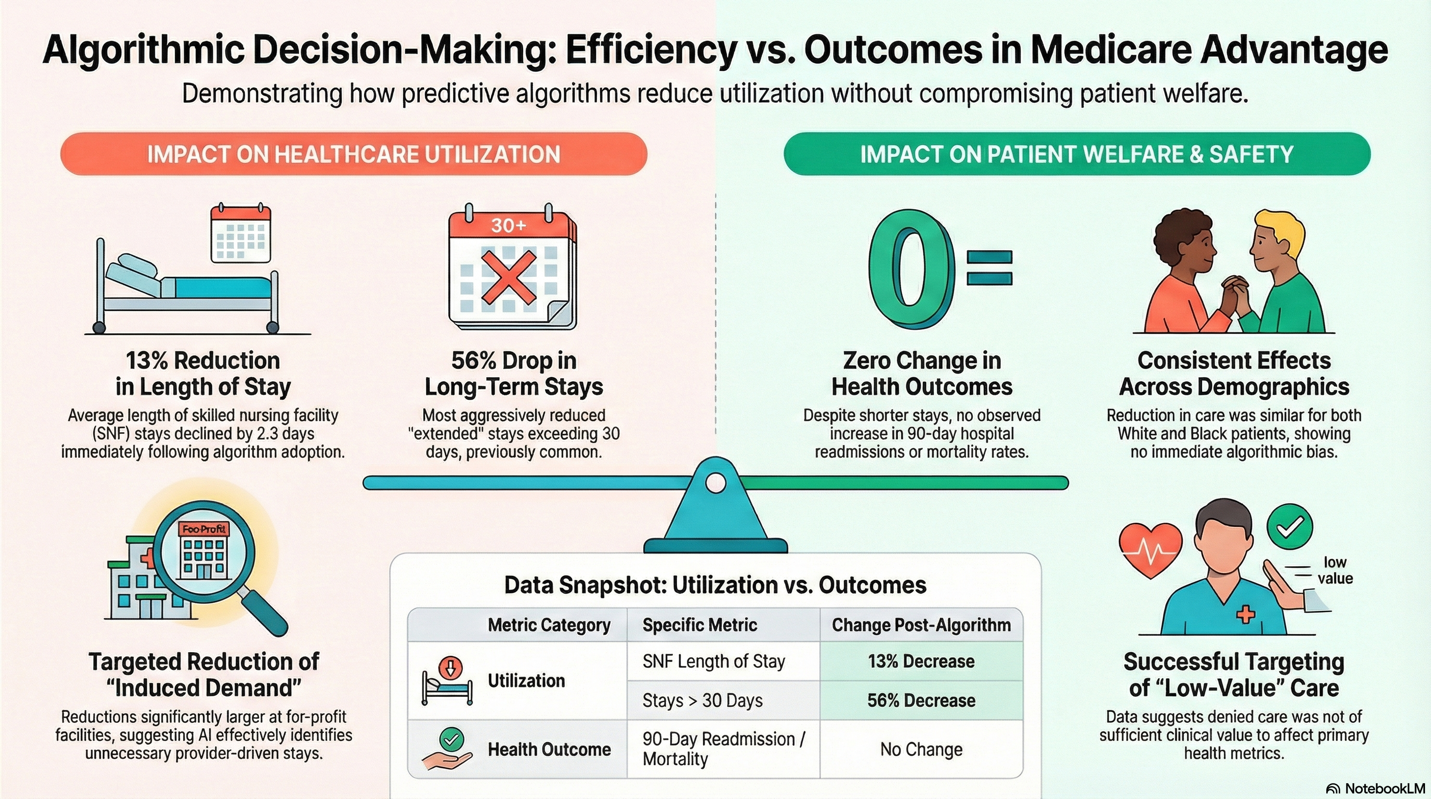

There is one peer-reviewed study on the impact of nH Predict. It found that the algorithm reduced post-acute care coverage by 13%, driven mainly by fewer long stays in skilled nursing facilities (SNFs). Specifically,

- The reductions did not significantly affect the initial likelihood of a patient being discharged to a SNF; instead, they influenced the duration of patients' stays.

- Stays longer than 30 days experienced a significant drop of 7.1 percentage points, which is a 56% decrease for longer-term stays.

- The declines in length of stay were more pronounced for patients admitted to for-profit SNFs, suggesting the algorithm effectively identified "induced demand" in settings where providers have greater incentives to extend stays.
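The two figures for stays longer than 30 days imply a baseline. Assuming the 56% decrease is measured relative to the pre-algorithm share of such stays (an assumption; the baseline is not stated above), the arithmetic is:

$$
p_{\text{before}} \approx \frac{7.1}{0.56} \approx 12.7\%, \qquad
p_{\text{after}} \approx 12.7\% - 7.1\% = 5.6\%
$$

In other words, under that assumption roughly one in eight stays exceeded 30 days before the algorithm, and roughly one in eighteen did afterward.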

The author found no worsening of outcomes, such as increased mortality or readmissions, and concluded that his research “demonstrates that using an algorithm in this way can reduce the use of relatively low-value care.”

Bug or Feature?

If the algorithm results in a high rate of overturned denials, could that be an intentional design tradeoff, one that prioritizes reducing false negatives (missed opportunities for cost savings) even at the cost of more false positives (wrongful denials)?

In any screening system built on a fixed model, you can move the decision threshold to reduce false negatives or false positives, but not both at once. So, cost containment can be a form of optimization. However, the insurer has a fiduciary responsibility, under its contract and to Medicare, to “accurately apply Medicare coverage standards and medical necessity.”
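The threshold tradeoff can be shown with a toy example. In this sketch a "positive" is a denial, matching the usage above. The scores and ground-truth labels are invented for illustration and have no connection to the actual model.

```python
def confusion_counts(scores, truly_unnecessary, threshold):
    """Deny coverage when score >= threshold.

    Returns (false_positives, false_negatives), where
      false positive = care was denied but was actually necessary (wrongful denial)
      false negative = care was approved but was actually unnecessary (missed saving)
    """
    false_positives = 0
    false_negatives = 0
    for score, unnecessary in zip(scores, truly_unnecessary):
        denied = score >= threshold
        if denied and not unnecessary:
            false_positives += 1
        elif not denied and unnecessary:
            false_negatives += 1
    return false_positives, false_negatives


# Hypothetical model scores ("how likely is further care unnecessary?") and true labels.
scores = [0.1, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
truly_unnecessary = [False, False, True, False, True, False, True, True]

for threshold in (0.35, 0.55, 0.75):
    fp, fn = confusion_counts(scores, truly_unnecessary, threshold)
    print(f"threshold={threshold:.2f}  wrongful denials={fp}  missed savings={fn}")
```

Lowering the threshold eliminates missed savings but produces wrongful denials, and raising it does the reverse. With the model held fixed, choosing the threshold is a choice about who bears the errors.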

Deny First, Correct Later

From a legal perspective, it is acceptable to cast a wide net, accepting more false positives (and wrongful denials), as long as there is meaningful individualized review. However, if correction occurs only after care has been cut off and harm has been done, the algorithm ceases to function as a screening tool and becomes a gatekeeper. Additionally, calibrating a model to overshoot on denials, knowing most patients won’t appeal, is a bad-faith action rather than a statistical issue.

However, cost containment and medical necessity are not inherently inconsistent goals.

Medicare Advantage organizations, as regulated health insurers, are expected to prevent fraud, waste, and abuse, and CMS explicitly permits utilization management and prior authorization. Can we then argue that UnitedHealthcare is fulfilling its fiduciary duties to the taxpayer?

Ideally, we could examine the algorithm’s inner workings. A cost-savings model might raise the bar for approval, narrow the acceptable range of length of stay, and rely on broad population averages rather than patient-specific ones. A fraud identifier might detect abnormal patterns in physician behavior, billing, and coding by comparing similar networks and patients. That information is not available; it is hidden behind a “proprietary” veil and opaque “machine learning.”

We might employ more empirical measures. After deployment of the algorithm:

- Did denial rates spike? [3]

- Did appeal overturn rates spike?

- Did patient outcomes worsen?

- Did hospital readmission rates increase?

- Did post-acute mortality change?
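Each of these questions is a before-and-after comparison of a rate. One standard way to check whether such a change is larger than chance variation is a two-proportion z-test, sketched below with the standard library only. The counts are hypothetical, and a significant difference would show only that the rate changed, not that the algorithm caused the change.

```python
from math import erfc, sqrt


def two_proportion_z_test(events_before, n_before, events_after, n_after):
    """Compare an event rate before and after deployment.

    Returns (difference in rates, z statistic, two-sided p-value).
    """
    if n_before <= 0 or n_after <= 0:
        raise ValueError("sample sizes must be positive")
    rate_before = events_before / n_before
    rate_after = events_after / n_after
    pooled = (events_before + events_after) / (n_before + n_after)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    if standard_error == 0:
        raise ValueError("rates are degenerate (all or no events)")
    z = (rate_after - rate_before) / standard_error
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail of the standard normal
    return rate_after - rate_before, z, p_value


# Hypothetical counts: denials out of requests, before and after deployment.
diff, z, p = two_proportion_z_test(
    events_before=100, n_before=1000, events_after=150, n_after=1000
)
print(f"change in denial rate: {diff:+.1%}, z = {z:.2f}, p = {p:.4f}")
```

The same function applies to appeal overturn rates, readmissions, or mortality, given the relevant counts.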

While cost containment and medical necessity are compatible goals in principle, the law requires that determinations of medical necessity be individualized and consistent with Medicare standards. That will be the Achilles’ heel of the defendants. Given the allegations that they overrode the judgment of treating physicians and failed to document individualized consideration, it seems the goal was cost containment rather than detection of fraud or waste.

The dispute centers on two difficult-to-identify factors: the model’s objective function and its validation data. The impact on outcomes and the structure and behavior of human oversight are more obvious and explain why the complaint has shifted from focusing on the algorithmic tool itself to the tool's users.

Algorithmic Blame or Human Accountability

Ultimately, the litigation reflects a significant shift in the assignment of responsibility. Earlier critiques focused on the algorithm itself, but the amended complaint instead frames the issue as one of human oversight and governance. The plaintiffs contend that the wrongdoing lies not in the algorithmic tool but in the defendants’ choice to rely on it as a substitute for personalized medical judgment. In this view, the core failure is institutional: humans built the system, adjusted its incentives, and determined how rigorously, or loosely, to supervise it.

This shift in focus is significant. Machine learning tools are now widely used in healthcare administration, covering areas like utilization management, billing audits, fraud detection, and coverage decisions. As these systems increasingly move from administrative tasks into clinical decision-making, the risks will escalate. The legal and ethical questions will no longer be about whether algorithms help control costs or reduce paperwork, but about whether institutions keep real human accountability. If human oversight becomes merely symbolic while algorithmic outputs silently determine care and ultimately outcomes, disputes like this could be early signs of a much larger problem in integrating AI into medicine.

[1] UnitedHealth has denied requests by patients or physicians to see the algorithm’s output or internal documentation.

[2] Separately, 80% of denied prior authorizations were reversed.

[3] The Health and Human Services Inspector General found that among Medicare Advantage organizations, 13% of prior authorization denials met Medicare coverage rules, meaning the services were wrongly denied. The algorithm’s error rate was 80%. The Inspector General also found that 18% of payment denials met both Medicare coverage rules and Medicare Advantage billing rules, meaning those claims should have been paid; the algorithm wrongly denied 90%. A previous report had found that Medicare Advantage organizations overturned approximately 75% of their own denials.