Lego is the world’s largest toy company, and it has built that dominance without screens. But even this analog icon is leaning into machine intelligence, not by abandoning its roots but by augmenting them.

“I’m deep inside Lego’s headquarters in Billund, Denmark, in a private office that’s part of the company’s Creative Play Lab. The secretive division of 237 staff based here and in London, Boston, and Singapore is dedicated to thinking up what comes next for the world’s largest toy brand. … In front of me, on a plain white table, is a batch of prototypes of Lego’s new Smart Brick, the final version of which is a small, sensor-laden 2-by-4 black brick with a big brain.”

The question isn’t whether AI will enter childhood but whether it can do so while preserving the spirit of imaginative play. Read the story of the Smart Brick’s development and of Lego’s determination to keep it true to the brand.

From Wired, An Inside Look at Lego’s New Tech-Packed Smart Brick

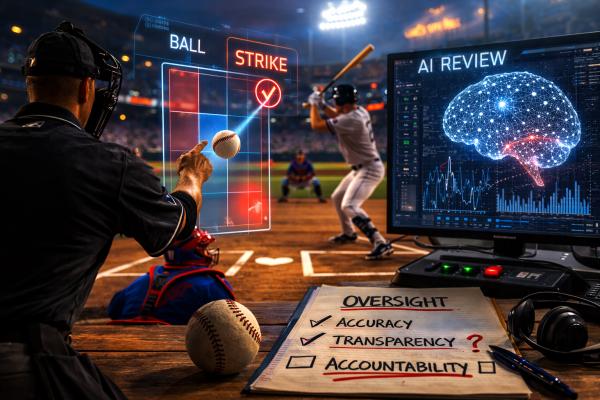

If Lego represents AI’s careful insertion into creativity, baseball shows its incursion into tradition. America’s pastime, long governed by human judgment, is now experimenting with algorithmic oversight.

“The call of an umpire has always been incontestable. There is no appeal process. So why do players and managers argue with umpires? I expect that it is in the hope that the umpire sees the expression of grievances as constructive advice for improvement. …

The MLB implementation of ABS [Automated Ball-Strike System] will serve as an appeal system of the umpires’ decisions.”

AI is not replacing the umpire outright but standing behind him, quietly auditing his authority. The strike zone becomes data; discretion becomes reviewable.

From The New Atlantis, You Can’t Work the Robo-Ref

In both cases, AI arrives as an assistant, enhancer, or backstop. But when the stakes shift from play to war, the questions sharpen considerably. With drone-surveilled kill zones in Ukraine giving off an unmistakable Terminator-style Skynet vibe, and now a war with Iran, AI has become military infrastructure. Who, then, decides how it is deployed? Will it be governed by Silicon Valley’s “move fast and break things” ethos, or by the deliberate pace of law and democratic oversight?

“Hegseth’s ultimatum rests on a simple premise: that the government, not private companies, should decide how it uses powerful technologies. In most cases, that principle is sound. But here it obscures two deeper problems. It minimizes the risks to domestic liberties, and it assumes a level of understanding that does not yet exist. The engineers building these systems acknowledge that they do not fully understand them, and that the models behave in ways that can be difficult to predict or control. Demanding unconditional access before those systems are ready is not an assertion of authority. It is a wager that the unknowns will not matter.”

From The Atlantic, The Real Reason Anthropic Wants Guardrails

AI companies want flexibility; governments want access; citizens want safety — and clarity. But clarity is hard to legislate when the technology evolves by the month. Tyler Cowen addresses that mismatch more directly, describing a quiet, informal détente between AI firms and the national security state — a system of implied guardrails that moves faster than Congress but lacks its transparency.

“The major AI companies keep the national security establishment apprised of the progress they are making, as has been the case with Anthropic. There is a general sense within the AI industry that if the national security authorities saw anything in the new products that was very concerning or that might undermine the national interest, they would inform the president and Congress. That would likely lead to more formal and more restrictive kinds of regulation, so the major AI companies want to show relatively safe demos and products. An informal back-and-forth enforces implied safety standards, without the involvement of formal legislation. … Another advantage of this system is that both Congress and the administrative state can be very slow to act. The AI landscape can change in just weeks, yet our federal government is used to taking years to issue laws and directives. Had we passed AI legislation in, say, 2024, today it would be badly out of date, no matter what your point of view on what such regulation should accomplish. For instance, in 2024, few outsiders were much concerned with the properties of, or risks from, autonomous AI ‘agents.’ Today, that is the number-one topic of concern.”

From Tyler Cowen in The Free Press, In the Pentagon Battle with Anthropic, We All Lose

From Smart Bricks to robo-umps to autonomous agents and drone warfare, the through-line is authority. Who designs the systems? Who oversees them? Who gets to overrule them? The toys are getting smarter. The strike zones are going digital. And the defense of the homeland may depend on systems that even their builders admit they do not yet fully understand.